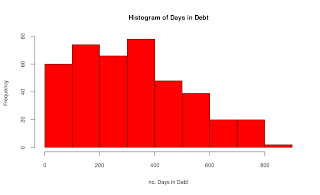

Like so many data sets, this one has some data cleanup challenges. The first is the use of Excel, which is not a problem in itself, but the authors added extensive headers and unusual formats to the summary tables. Here is my attempt to get one of the first data files cleaned up and working. One big problem is converting values like $1,000.00 into 1000.00. I have some code below, but would appreciate any help in making it better. Below are the summary graphs of the data:

The basic graphs are pretty self-explanatory. For fun I ran a regression of average daily balance on days in debt to see if there is any correlation. The first regression was okay, but I noticed it could use a semi-log transformation. After taking the log of the average daily balance, I got a much better-looking regression as well as a better R^2.
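To show why a semi-log transformation can help, here is a minimal sketch on simulated data (not the Fed data). The variables `x` and `y` and the numbers used to generate them are made up purely for illustration.

```r
# Simulated data only (NOT the Fed data): y grows roughly exponentially in x.
set.seed(1)
x <- 1:100
y <- exp(1 + 0.05 * x + rnorm(100, sd = 0.3))

lin.fit <- lm(y ~ x)        # regression on the raw scale
log.fit <- lm(log(y) ~ x)   # semi-log regression: log(y) on x

# Pull R^2 out of each model summary and show them side by side
r2.lin <- summary(lin.fit)$r.squared
r2.log <- summary(log.fit)$r.squared
c(linear = r2.lin, semi.log = r2.log)

# On the log scale the slope is a growth rate: each extra unit of x
# multiplies the fitted y by exp(slope).
exp(coef(log.fit)["x"])
```

One caveat: the two R^2 values are computed on different response scales (y versus log(y)), so they are a rough guide rather than a strict like-for-like comparison.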

Below is my R code:

    # Fed data
    library(stringr)

    fed.01 <- read.csv(file.choose(), header = TRUE)
    summary(fed.01)

    # Cleaning up the data: remove the leading $ sign and the ',' in 1,000
    average <- str_sub(fed.01$Average.Daily.Balance..in.Millions.of.U.S..Dollars.,
                       start = 2, end = -1)
    average <- as.numeric(gsub(",", "", average))
    fed.02 <- cbind(average, fed.01)

    # Exploratory graphs
    hist(fed.02$Days.in.Debt, main = 'Histogram of Days in Debt', col = 'red',
         xlab = 'no. Days in Debt')
    years.debt <- fed.02$Days.in.Debt / 365
    hist(years.debt, main = 'Histogram of Years in Debt', col = 'red',
         xlab = 'no. Years in Debt', breaks = 15)

    # Bar graphs of the data
    # The country of origin
    par(las = 2, mar = c(5, 12, 4, 2), mfrow = c(1, 1))
    country <- sort(table(fed.01$Country))
    barplot(country, main = 'Nation of Banks', col = 'blue', horiz = TRUE)

    # Type of bank or industry
    par(las = 2, mar = c(5, 17, 4, 2), mfrow = c(1, 1))
    industry <- sort(table(fed.01$Industry))
    barplot(industry, main = 'Type of Industry', col = 'blue', horiz = TRUE)

    # Organizations with average balances greater than $5 billion
    # (balances are in millions, so $5 billion is 5000)
    five.bill <- subset(fed.02, average > 5000)
    # Sort the rows, not just the values, so each bar keeps its own company label
    five.bill <- five.bill[order(five.bill$average), ]
    par(las = 2, mar = c(5, 19, 4, 2), mfrow = c(1, 1))
    barplot(five.bill$average, names.arg = five.bill$Company,
            main = 'Companies With Average Daily Balance Greater than $5 Billion',
            col = 'blue', horiz = TRUE)

    # Organizations with debt for more than 730 days (2 years)
    year.comp <- subset(fed.02, Days.in.Debt > 730)
    # Sort the rows here too so the labels line up with the bars
    year.comp <- year.comp[order(year.comp$Days.in.Debt), ]
    par(las = 1, mar = c(5, 20, 4, 2))
    barplot(year.comp$Days.in.Debt, names.arg = year.comp$Company,
            main = 'Companies With Days of Debt Greater than 730 Days (2 Years)',
            col = 'red', horiz = TRUE, xpd = FALSE, xlim = c(720, 830))
    par(las = 0, mar = c(5, 4, 4, 2))  # reset plotting parameters

    # Regression of Days in Debt to Ave. Daily Balance
    # Plotted the data; the R^2 is poor and the slope is positive,
    # nothing to get too excited about, so took the log
    plot(fed.02$Days.in.Debt, fed.02$average, xlab = 'Days in Debt',
         ylab = 'Ave. Daily Balance',
         main = 'Scatter Plot: Daily Balance and Days in Debt')
    lm.01 <- lm(average ~ Days.in.Debt, data = fed.02)
    abline(lm.01)
    summary(lm.01)

    # Log of fed.02$average to reduce the influence of the outliers
    log.aver <- log(fed.02$average)
    plot(fed.02$Days.in.Debt, log.aver, xlab = 'Days in Debt',
         ylab = 'Log of Ave. Daily Balance',
         main = 'Scatter Plot: Log Daily Balance and Days in Debt')
    lm.02 <- lm(log.aver ~ Days.in.Debt, data = fed.02)
    abline(lm.02)
    summary(lm.02)
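On the question of turning $1,000.00 into 1000.00, one possible tidy-up is a small helper that strips the dollar sign, commas, and stray spaces in a single `gsub()` call. This is only a sketch: `clean.dollars` is a hypothetical name, not part of the script above, and it assumes the values have no other decorations, such as parentheses for negative amounts.

```r
# Hypothetical helper (not in the original script): strip currency
# formatting from strings such as "$1,000.00" and convert to numeric.
clean.dollars <- function(x) {
  x <- as.character(x)               # read.csv may have produced a factor
  x <- gsub("[$,[:space:]]", "", x)  # drop '$', ',' and any whitespace
  as.numeric(x)
}

# The values are 1000, 12345678.9 and 5.25
clean.dollars(c("$1,000.00", "$12,345,678.90", " $5.25 "))
```

Unlike removing the first character by position, this does not depend on the `$` being the first character, so leading spaces or a missing dollar sign do not break it. With the data above it would be called as `clean.dollars(fed.01$Average.Daily.Balance..in.Millions.of.U.S..Dollars.)`. If adding a package is acceptable, `readr::parse_number()` does a similar job.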